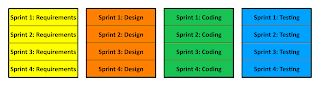

Perhaps that is a bit harsh, but on the question of whether Agile or Waterfall is better, the reality is that Agile ends up being multiple small waterfall cycles. So you end up doing Waterfall in pieces.

All projects are composed of a set of tasks, typically executed by multiple people. Within your set of tasks, some are dependent on others. In particular we can never get away from the fact that to implement any single requirement, we need to analyse the requirement (what), design the solution (how), code the solution (execute), and test the result.

So any iterative and incremental project (e.g. Agile, Scrum, RAD, XP, etc.) is really a series of small waterfalls. The difference is that between the waterfalls we have sandwiched requirements, analysis, design, and coding, which gives us the ability to change direction when we need to.

Note: If you never need to change direction (which is not often :-)) then Waterfall is a viable option. Waterfall makes the most sense in projects where there is little to no requirements or technical uncertainty*, e.g. reimplementing a system with experienced developers in a new technology where the use cases for the previous system are not changing.

The typical waterfall project assumes the above picture: that all requirements can be done, then all design, then all coding, and then all testing. It is based on a 1960s factory mentality that code can be assembled like a car on an assembly line.

Waterfall only works if the amount of rework in any phase is immaterial, that is, if it does not meaningfully affect the length of the project. Typically this is not true. In the presence of requirements uncertainty, requirements need to be revisited many times. Often, missing or inconsistent requirements will cause scope to change, requiring change requests in the process.

All change requests will affect the project schedule.

In the presence of technical uncertainty, design needs to be revisited many times. Often when using newer APIs, the API is not documented or, even worse, the code mechanism from the creator/vendor simply does not work and requires technical workarounds that materially affect the project schedule.

This will create a change request that will affect the project schedule.

An agile project builds requirements and design into every sprint. This allows you to change directions when either requirements or technical decisions are uncertain.

This is the series of small waterfalls -- you are still doing Waterfall, but in an Agile way.

When you add up all the sprints of a project, you will realize that you have done the same project (i.e. the work is the work), but you have sliced it up differently.
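The "same work, sliced differently" point can be sketched in a few lines of Python. This is only an illustration under simple assumptions: the requirement names, the `PHASES` list, and the helper functions `waterfall`, `sprints`, and `work_units` are all hypothetical, and every requirement is assumed to need each phase exactly once (no rework).

```python
# Hypothetical phases every requirement must pass through.
PHASES = ["analyse", "design", "code", "test"]


def waterfall(requirements):
    """One big cycle: finish a phase for ALL requirements before starting the next."""
    return [[(req, phase) for phase in PHASES for req in requirements]]


def sprints(requirements, per_sprint):
    """Several small cycles: each sprint is a mini waterfall over a slice of requirements."""
    cycles = []
    for start in range(0, len(requirements), per_sprint):
        batch = requirements[start:start + per_sprint]
        cycles.append(waterfall(batch)[0])
    return cycles


def work_units(cycles):
    """Flatten cycles into a sorted list of (requirement, phase) units for comparison."""
    return sorted(unit for cycle in cycles for unit in cycle)


if __name__ == "__main__":
    reqs = ["login", "search", "checkout", "reports"]  # made-up requirements
    big = waterfall(reqs)
    small = sprints(reqs, per_sprint=2)

    # The work is the work: both plans contain exactly the same units.
    assert work_units(big) == work_units(small)
    print(len(big), "cycle vs", len(small), "cycles,",
          len(work_units(big)), "work units each")
```

What changes between the two plans is only where the cycle boundaries fall, and each boundary is a point where direction can change before the next batch is analysed.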

So it is not a question of whether you will be doing Waterfall or not. It is a matter of which sprint cycle will support the requirements and technical uncertainty of your project. If your project has little to no uncertainty, then your sprint cycle is the entire project.

If your project has requirements or technical uncertainty, then sprint cycles of 2-3 weeks are ideal.

*Note: Projects with significant dependencies on client interaction behave much like projects with requirements or technical uncertainty, so schedule uncertainty can also drive the need for agile project management.

Make no mistake, I am the biggest "Loser" of them all. I believe that I have made every mistake in the book once :-)